Since the Great Recession, utility planners have consistently overforecast peak and total load. The econometric models used in the past are failing: energy efficiency continues to decouple load growth from gross domestic product (GDP), and customer energy habits are changing.

Another factor contributing to inaccurate forecasting is the adoption of new, highly impactful distributed energy resources (DERs), and the costs of getting it wrong can be high. According to methods laid out by the National Renewable Energy Laboratory (NREL), inaccurate forecasting of distributed PV adoption can cost a large investor-owned utility up to $2.5 million per year in bulk capacity and generation costs alone.

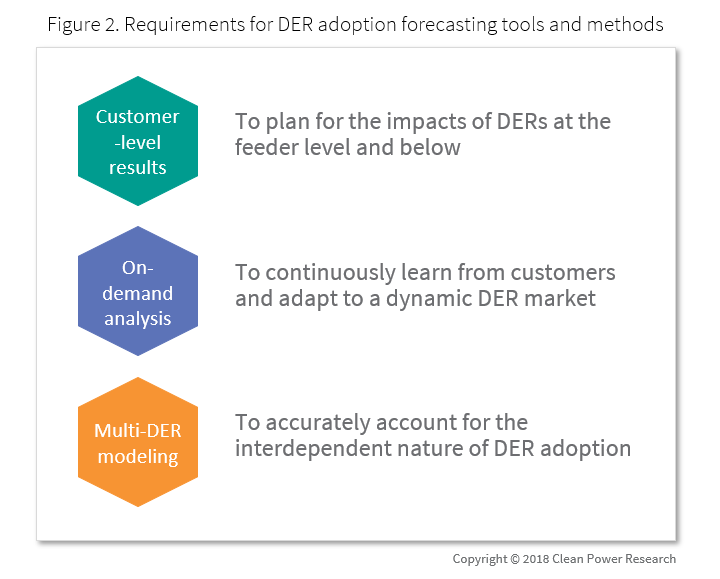

Today, many utilities rely on simplistic approaches to forecasting DER adoption, while a few have moved to more sophisticated models under pressure from rising DER adoption. Most approaches, however, still leave utilities exposed to the high costs of poor forecasting. To properly address this risk, DER adoption forecasting tools and methods need the following attributes:

- Customer-level results

- On-demand analysis

- Multi-DER modeling

In this article, we’ll dig into current approaches and highlight exciting new methods to provide some clarity on the state of DER adoption forecasting.

Today’s common adoption forecasting methods

Simple

The simplest and most common approaches to planning for DER adoption are stipulated and program-based methods. These methods assume that DER adoption will align with specific targets set by policy or program goals: for example, a renewable portfolio standard with a carve-out for distributed PV, or a state policy goal of putting a certain number of EVs on the road. This approach puts the utility at high risk because these targets are static and provide no visibility into how adoption may be distributed throughout a service territory.

Regression-based approaches, such as linear or polynomial regression, are slightly more sophisticated than stipulated methods. These approaches tune model parameters to match historical adoption and then use the tuned model to forecast new adoption. Because customer-level data is lacking, regression-based forecasts tend to be applied at the service-area level.
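
To make the regression approach concrete, here is a minimal Python sketch of the linear case, assuming a hypothetical series of cumulative service-area adopters by year. It fits an ordinary least squares line to the history and extrapolates it; a polynomial regression would add higher-order terms in the year but follow the same fit-then-extrapolate pattern. The function names `fit_linear` and `forecast_linear` are illustrative, not taken from any utility tool.

```python
def fit_linear(years: list[float], adopters: list[float]) -> tuple[float, float]:
    """Ordinary least squares fit of adopters = slope * year + intercept."""
    n = len(years)
    if n < 2:
        raise ValueError("need at least two historical points")
    mean_x = sum(years) / n
    mean_y = sum(adopters) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, adopters))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast_linear(years, adopters, future_years):
    """Tune the line to historical adoption, then extrapolate to future years."""
    slope, intercept = fit_linear(years, adopters)
    return [slope * y + intercept for y in future_years]


if __name__ == "__main__":
    # Hypothetical service-area history of cumulative adopters.
    history_years = [2015, 2016, 2017, 2018]
    history_adopters = [100, 200, 300, 400]
    print(forecast_linear(history_years, history_adopters, [2019, 2020]))  # → [500.0, 600.0]
```

The output is a single service-area total: nothing in the fit indicates which customers will adopt.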

Advanced

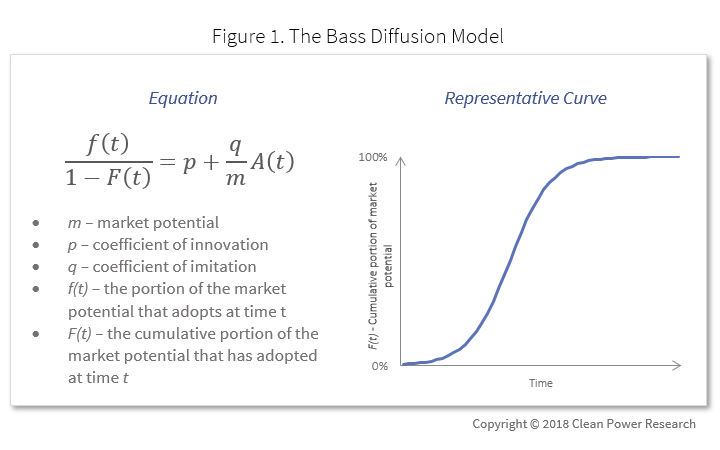

Many of the more sophisticated DER adoption models used by utilities today are based on the Bass Diffusion Model. The model has been validated and tested in many industries, and it is generally accepted as reasonably accurate. It rests on the sociological theory that the adoption of a new technology is driven by early adopters (innovators) influencing later adopters (imitators).
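
For reference, the standard closed-form solution of the Bass model gives cumulative adopters as N(t) = m * (1 - exp(-(p + q) * t)) / (1 + (q / p) * exp(-(p + q) * t)), where m is the market potential, p is the coefficient of innovation, and q is the coefficient of imitation. The Python sketch below simply evaluates that formula and differences it into per-period adoption; the parameter values in the usage example are illustrative assumptions, not fitted DER values.

```python
import math


def bass_cumulative(t: float, m: float, p: float, q: float) -> float:
    """Cumulative adopters N(t) under the Bass Diffusion Model.

    m: market potential (total eventual adopters)
    p: coefficient of innovation (adoption independent of prior adopters)
    q: coefficient of imitation (adoption driven by prior adopters)
    """
    if p <= 0:
        raise ValueError("p must be positive")
    decay = math.exp(-(p + q) * t)
    return m * (1 - decay) / (1 + (q / p) * decay)


def bass_new_adopters(periods: int, m: float, p: float, q: float) -> list[float]:
    """New adopters in each period 1..periods, as differences of N(t)."""
    return [
        bass_cumulative(t, m, p, q) - bass_cumulative(t - 1, m, p, q)
        for t in range(1, periods + 1)
    ]


if __name__ == "__main__":
    # Illustrative parameters only: 10,000 eventual adopters, p=0.03, q=0.38.
    for year, n in enumerate(bass_new_adopters(10, 10_000, 0.03, 0.38), start=1):
        print(year, round(n))
```

Because every customer shares the same p, q, and m, the output describes the territory as a whole rather than any individual customer.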

For DER adoption modeling, the market potential m (the total number of eventual adopters) is usually a function of payback: how much solar might be installed, or how many electric vehicles purchased, if the payback period is 2 years? 5 years? 10 years?
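
One way to represent a payback-dependent market potential is a lookup curve of (payback period, share of customers willing to adopt) points, interpolated linearly, as in this Python sketch. The curve would come from survey-based studies; the points in the usage example are made-up placeholders, and the function names are hypothetical.

```python
from bisect import bisect_left


def market_fraction(payback_years: float, curve: list[tuple[float, float]]) -> float:
    """Share of customers willing to adopt at a given payback period.

    curve: (payback_years, fraction) points with strictly increasing
    payback_years, e.g. from a survey-based study. Linear interpolation
    between points; payback periods outside the curve are clamped.
    """
    xs = [x for x, _ in curve]
    ys = [y for _, y in curve]
    if payback_years <= xs[0]:
        return ys[0]
    if payback_years >= xs[-1]:
        return ys[-1]
    i = bisect_left(xs, payback_years)  # first point with x >= payback_years
    x0, x1 = xs[i - 1], xs[i]
    y0, y1 = ys[i - 1], ys[i]
    return y0 + (y1 - y0) * (payback_years - x0) / (x1 - x0)


def market_potential(customers: int, payback_years: float, curve) -> float:
    """Market potential m: eligible customers times the willing-to-adopt share."""
    return customers * market_fraction(payback_years, curve)


if __name__ == "__main__":
    # Made-up placeholder curve, not survey results.
    curve = [(2, 0.6), (5, 0.3), (10, 0.1), (20, 0.0)]
    for payback in (2, 5, 10):
        print(payback, market_potential(10_000, payback, curve))
```

The resulting value plays the role of m in the Bass model; changing the curve requires new survey data, which is why the mapping is slow to update.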

Continuously updating forecast results on demand with the Bass Diffusion Model is challenging, if not impossible, because the relationship between m and payback is determined through survey-based studies that take significant time and manpower to complete.

Also, while the Bass Diffusion Model is useful for generating macro-level results, it cannot account for the unique impact of individual customer-level predictive variables such as proximity to other adopters, home size, and customer engagement data. Producing highly granular results requires a wealth of customer-level data.
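
By contrast, a customer-level model scores each customer individually. The Python sketch below is a swapped-in illustration, not WattPlan Grid's method: it counts prior adopters within a radius (a proximity feature based on haversine distance) and combines that count with home size and a hypothetical engagement score through a logistic function. The `Customer` fields, the 1 km radius, the weights, and the coordinates are all assumptions; a real model would fit its weights to historical adoption data.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius used by the haversine formula


@dataclass
class Customer:
    """Hypothetical customer record; field names are illustrative."""
    customer_id: str
    lat: float
    lon: float
    home_sqft: float
    engagement: float  # hypothetical 0..1 engagement score


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # Clamp to guard against tiny floating-point overshoot above 1.
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def nearby_adopters(customer, adopter_locations, radius_km=1.0):
    """Count prior adopters within radius_km of the customer."""
    return sum(
        1
        for lat, lon in adopter_locations
        if haversine_km(customer.lat, customer.lon, lat, lon) <= radius_km
    )


# Placeholder weights; a real model would fit these to historical adoption.
WEIGHTS = {"intercept": -4.0, "nearby": 0.5, "sqft_thousands": 0.3, "engagement": 1.5}


def adoption_propensity(customer, adopter_locations, weights=WEIGHTS):
    """Logistic score in (0, 1); higher means more likely to adopt."""
    z = (
        weights["intercept"]
        + weights["nearby"] * nearby_adopters(customer, adopter_locations)
        + weights["sqft_thousands"] * customer.home_sqft / 1000.0
        + weights["engagement"] * customer.engagement
    )
    return 1.0 / (1.0 + math.exp(-z))


if __name__ == "__main__":
    adopters = [(38.58, -121.49), (38.581, -121.491)]  # hypothetical adopter sites
    near = Customer("A", 38.58, -121.49, 1800, 0.5)
    far = Customer("B", 40.0, -120.0, 1800, 0.5)
    for c in (near, far):
        print(c.customer_id, round(adoption_propensity(c, adopters), 3))
```

Two customers with identical homes and engagement receive different scores purely because of their neighbors, an effect a single territory-wide diffusion curve cannot express.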

The future

Advanced modeling and machine learning methods, such as discrete choice experiments, agent-based modeling, and neural networks, are receiving growing attention as solutions to DER adoption forecasting. We’ll delve deeper into how these and other methods can be applied to DER adoption forecasting in our soon-to-be-released whitepaper.

For today, the key takeaways are that applying these advanced methods requires substantial data science expertise, and implementing them in a scalable way requires software engineering expertise on par with that of Microsoft, Google, and Amazon.

A better way to forecast DER adoption

Back to our requirements for DER adoption forecasting tools and methods: customer-level results, on-demand analysis, and multi-DER modeling.

The newest software product from Clean Power Research, WattPlan® Grid, addresses these requirements. It merges advanced machine learning toolkits and customer-level big data with scalable cloud computing and a user-friendly interface. Today, our development partner, the Sacramento Municipal Utility District (SMUD), is using forecast scenarios from WattPlan Grid to guide its rate design and DER strategy. SMUD can forecast adoption propensity for more than 600,000 customers in a few days.

Curious to learn more about neural networks and how cutting-edge techniques compare to today’s methods such as the Bass Diffusion Model? Interested in learning how you can optimize rate design, analyze “non-wires alternatives” and improve customer program targeting?

Stay tuned; our whitepaper “A better way to forecast DER adoption” will be out soon.